A Tesla owner blames his vehicle’s Full Self-Driving feature for veering toward an oncoming train before he could intervene.

Craig Doty II of Ohio was driving on a highway at night earlier this month when dash cam footage showed his Tesla rapidly approaching a passing train with no signs of slowing down.

He stated that his vehicle was in Full Self-Driving (FSD) mode at the time and did not slow down even though the train was crossing the road, but he did not specify the model of the car.

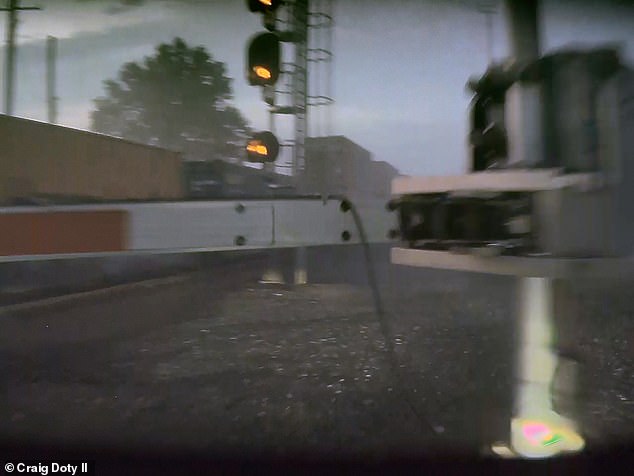

In the video, the driver appears to have been forced to intervene, swerving right through the railway crossing signal and stopping a few meters from the moving train.

Tesla has faced numerous lawsuits from owners who claimed that the FSD or Autopilot feature caused an accident because it failed to stop for another vehicle or swerved into an object, in some cases claiming the lives of the drivers involved.

The Tesla driver claimed his vehicle was in Full Self-Driving (FSD) mode but did not slow down as it approached a train crossing the road. In the video, the driver was allegedly forced to swerve off the road to avoid colliding with the train. In the photo: the Tesla vehicle after the near collision.

As of April 2024, the Autopilot systems in Tesla Models Y, X, S and 3 had been involved in a total of 17 deaths and 736 accidents since 2019, according to the National Highway Traffic Safety Administration (NHTSA).

Doty reported the issue on the Tesla Motors Club website, saying he has owned the car for less than a year, but “in the last six months, it has twice attempted to drive directly into a passing train while in FSD mode.”

Doty said he had tried to report the incident and to find cases similar to his, but could not find an attorney to take his case because he had not suffered any major injuries, only back pain and a bruise.

DailyMail.com has contacted Tesla and Doty for comment.

Tesla cautions drivers against using the FSD system in low light or poor weather conditions such as rain, snow, direct sunlight and fog, which can “significantly degrade performance.”

This is because such conditions interfere with the operation of the Tesla’s sensors, which include ultrasonic sensors that bounce high-frequency sound waves off nearby objects.

The car also uses radar, which emits radio waves to determine whether a vehicle is nearby, and 360-degree cameras.

Together, these systems collect data about the surrounding area, including road conditions, traffic, and nearby objects, but in low-visibility conditions they cannot accurately detect their surroundings.

Craig Doty II reported that he had owned his Tesla vehicle for less than a year, but that the Full Self-Driving feature had already caused two near-accidents. In the image: The Tesla approaches the train but does not slow down or stop.

Tesla is facing numerous lawsuits from owners who claim that the FSD or Autopilot feature caused their accident. Pictured: Craig Doty II’s car runs off the road to avoid the train.

When asked why he had continued to use the FSD system after the first near-collision, Doty said he was confident it would work properly because he hadn’t had any other problems for a while.

“After using the FSD system for a while, you tend to trust that it will work correctly, much like you would with adaptive cruise control,” he said.

“You assume the vehicle will slow down when you approach a slower car in front, until it doesn’t, and suddenly you’re forced to take control.

“This complacency can increase over time because the system typically works as expected, making incidents like this particularly worrying.”

The Tesla manual does warn drivers against relying solely on the FSD function, saying they should keep their hands on the wheel at all times, “be aware of road and surrounding traffic conditions, pay attention to pedestrians and cyclists, and always be prepared to take immediate action.”

Tesla’s Autopilot system has been accused of causing a fiery crash that killed a Colorado man while he was driving home from the golf course, according to a lawsuit filed May 3.

Hans Von Ohain’s family claimed he was using his 2021 Tesla Model 3’s Autopilot system on May 16, 2022, when it swerved to the right off the road, but Erik Rossiter, who was a passenger, said the driver was very intoxicated at the time of the crash.

Von Ohain attempted to regain control of the vehicle but was unable to, and died when the car hit a tree and burst into flames.

An autopsy report later revealed that he had three times the legal limit of alcohol in his system when he died.

A Florida man also died in 2019 when his Tesla Model 3’s Autopilot failed to brake as a truck turned onto the road, causing the car to slide under the trailer. The man was killed instantly.

In October last year, Tesla won its first lawsuit over allegations that the Autopilot feature caused the death of a Los Angeles man whose Model 3 left the road and crashed into a palm tree before bursting into flames.

NHTSA investigated accidents associated with the Autopilot feature and said a “weak driver involvement system” contributed to the car accidents.

The Autopilot feature “led to foreseeable misuse and preventable accidents,” the NHTSA report said, adding that the system does not “sufficiently guarantee driver attention and its proper use.”

Tesla issued an over-the-air software update in December for two million vehicles in the US that was supposed to improve the vehicles’ Autopilot and FSD systems, but NHTSA has now suggested that the update probably did not go far enough in light of further accidents.

Elon Musk has not commented on the NHTSA report, but he previously touted the effectiveness of Tesla’s self-driving systems, stating in a 2021 post on X that people who used the Autopilot feature were 10 times less likely to be in an accident than drivers of the average vehicle.

“People are dying due to misguided confidence in the capabilities of Tesla’s Autopilot. Even simple measures could improve safety,” Philip Koopman, an automotive safety researcher and professor of computer engineering at Carnegie Mellon University, told CNBC.

“Tesla could automatically restrict the use of Autopilot to intended roads based on map data already in the vehicle,” he continued.

“Tesla could improve monitoring so that drivers can’t become engrossed in their cell phones while Autopilot is in use.”