AI is infiltrating every aspect of our lives, but until now it had drawn a line at the bedroom door. No more.

A new app urges women to take photos of their dates’ penises and upload them for an AI-powered ‘peen check’ to detect possible STIs before having sex, even though it has no way to verify that the person photographed has consented or is over 18.

Tech company HeHealth’s Calmara describes itself as your “tech-savvy best friend for STI checks” and urges users to “take a photo” so its AI can look for “visual signs of STIs” and tell them within seconds whether they are ‘in the clear.’

Although it is innovative, the app struggles with reliability, as the company admits on its website: for some conditions it has an accuracy rate of only 65 percent, meaning it gets roughly one in three results wrong.

Experts have also raised the alarm about major privacy problems. The technology site Engadget wrote, “Friends don’t let friends use an AI STI test,” arguing there is no way to ensure consent or secure data storage.

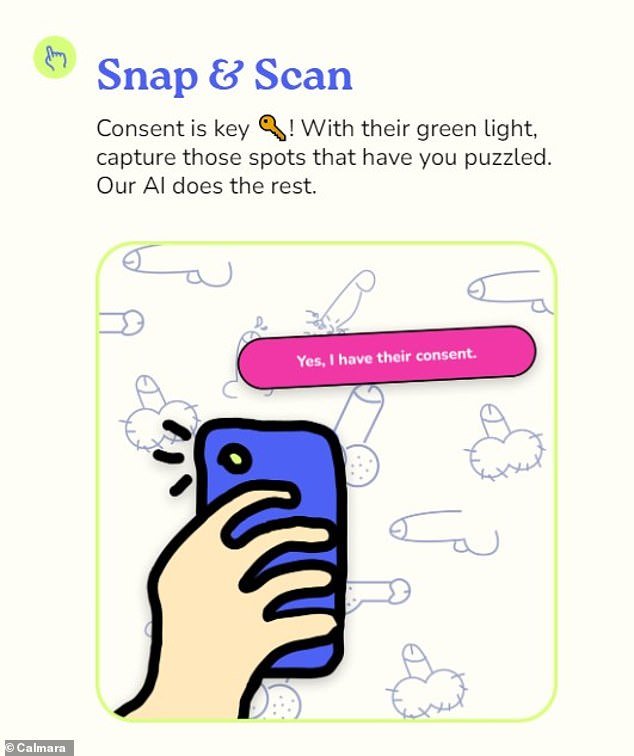

Calmara, launched in the US by technology company HeHealth, describes itself as your “tech-savvy best friend for STI checks.” In this image, Calmara shows the basic process for uploading photos of genitals to its app.

Experts have raised the alarm about major privacy issues, saying there is no way to ensure consent or the secure storage of images such as this mock photo of a banana.

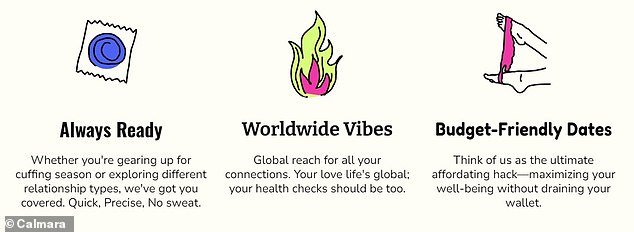

Calmara is free to use and was trained on thousands of penis pics, collected from the public and reviewed by doctors.

Users can download the app, take a photo of their partner’s penis, and upload it with just a few clicks.

The built-in AI then scans the photo and compares it to its image database for possible signs of STIs.

If there are any concerns, it will tell the user to postpone sex and suggest courses of action such as using protection or visiting a doctor. It will not reveal which specific infection it suspects the man has.

If there are no signs of illness, it will give the “all clear” to go ahead.

But on its website, Calmara includes a disclaimer telling users to “consider this more as your first line of defense, not a full fortress.”

It adds: ‘Calmara is an expert at spotting the signs that are out and about, but remember, some STIs play the long game, hiding (asymptomatic) or only appearing weeks after you have been exposed.

‘And yes, there are some that don’t show up on Calmara’s scans. So if Calmara gives you a “Clear!” nod, that doesn’t mean you can skip any more checks.’

It also states that its accuracy varies widely, from 65 to 96 percent depending on the condition, with results affected by lighting and skin color.

Basil Donovan, sexual health expert and emeritus professor at UNSW’s Kirby Institute, told The Guardian that the technology has a long way to go.

The app requires users to obtain consent from their partners, but there is no way to verify that they have actually done so.

While the app offers “world vibes,” there is no way to guarantee that users are over 18 years old.

The app promises “on-the-spot clarity” and insists it deletes photos “faster than Snapchat.”

Professor Donovan said: “Even if you’re in a well-lit clinic and you have a doctor with 30 years of experience… looking at a lesion on someone’s penis, it has pretty weak diagnostic value just by looking at it.”

There are also concerns about data security and privacy.

The app warns that before uploading any image “you must have obtained explicit consent from all people who appear in the images,” but there is no way to check whether a user has actually done so.

There is also no way to verify that users are over 18 years of age.

In the app’s terms and conditions, parent company HeHealth writes: ‘HeHealth Inc. requires all users to affirm that they are of legal age (18 years or older) as part of the account registration process.

‘However, HeHealth Inc. does not have the means to verify the age of individuals who access and use the Service.’

Yudara Kularathne founded HeHealth and Calmara after a friend of his went through an STI scare.

Co-founder Mei-Ling Lu said the company is “working” on privacy concerns.

In addition to accuracy and consent, privacy is another “major concern” for experts.

Calmara promises that data is stored securely in the US and that it does not collect any identifying information or store the photos.

Thorne Harbor Health chief executive Simon Ruth told The Guardian: “Recent events have shown us how easily private health information can be hacked and disseminated if the technology collecting that information is not backed by rigorous data security protocols.”

He added: “If technological advances engage people who have never before taken steps to care for their sexual health and well-being, it is a step in the right direction, but it is not a substitute for regular STI testing.”

Calmara did not immediately respond to DailyMail.com’s request for comment.