Netflix’s crackdown on password sharing might have been bad news for many of us still lurking in our friends’ accounts.

But for the streaming giant, the risky move has now translated into skyrocketing profits and a record number of subscribers.

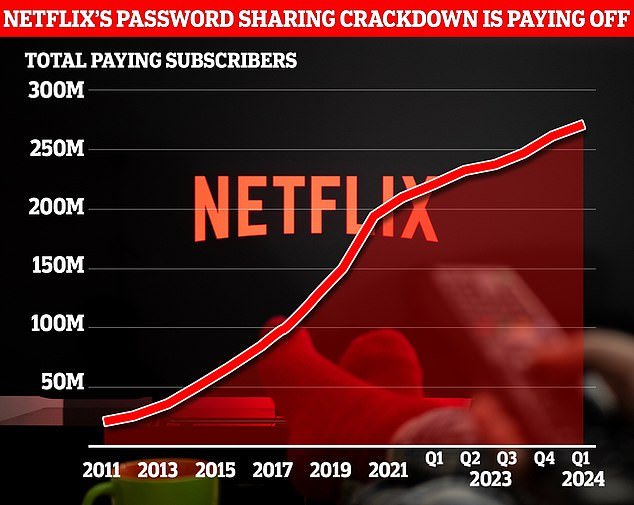

In the first three months of 2024, Netflix added 9.3 million new customers, bringing its total subscriber base to nearly 270 million.

And with subscription prices rising, this has given the company a quarterly profit of more than $2.3bn (£1.85bn), up from $1.3bn (£1bn) in the same period of 2023.

However, the company also revealed that it will no longer report its subscriber numbers starting next year, raising concerns that growth could soon slow.

Netflix’s crackdown on password sharing is paying off, as the streaming giant added another 9 million subscribers in the first three months of this year.

Despite the price increases, Netflix revealed that year-over-year subscriber growth was the highest in the last 12 months.

In the first three months of the year, subscriber growth increased to 16 percent compared to just 4.9 percent at this time last year.

Much of this growth is likely due to the streaming service’s recent crackdown on password sharing.

After investors were taken aback by Netflix’s first net loss of customers in 2022, the company introduced a paid sharing system.

Instead of sharing an account with friends for free, account holders now have to pay a fee to let people outside their household use it.

By looking at your device’s IP address and location, Netflix checks to see if you’re part of the household paying the bill.

If the service detects what it thinks is a shared password, the device is locked and users have the option to create their own account.

Although considered a gamble by some at the time, this decision appears to have paid off.

At the end of last year, Netflix added 13 million new subscribers and raised its total to 260.28 million.

However, Netflix attributes its success to a string of hits that have driven engagement.

The company says its biggest hits included Society of the Snow with 98.5 million views, Fool Me Once with 98.2 million views and Griselda with 66.4 million views.

Netflix features a string of big hits, including crime drama ‘Griselda’ (pictured), which garnered 66.4 million views.

Netflix remains the most popular streaming service in the world, ahead of Amazon Prime Video, which has 220 million subscribers worldwide.

This growth comes as Netflix has made several changes to the pricing of its subscriptions.

Currently, the cheapest plan with ads costs £4.99 in the UK ($6.99 in the US), compared to £10.99 in the UK ($15.49 in the US) for the ad-free subscription.

Meanwhile, a premium plan, which includes HD streaming and additional members, now costs £17.99 in the UK ($23 in the US).

Netflix recently raised the price of the basic plan by £1 in the UK ($2 in the US) and the premium plan by £2 in the UK ($2 in the US).

During the final months of 2023, a large portion of the 13 million new subscribers opted for the cheapest plan with ads.

In the 12 countries where ads are offered, including the United Kingdom and the United States, these plans accounted for 40 percent of new subscribers.

This latest 2024 update does not include a breakdown of subscribers by account type.

Netflix’s growth comes even after the company raised prices for most subscription tiers. However, this will be the last year the company reports subscriber numbers.

This will be one of the last times Netflix gives any indication of its subscriber numbers.

In its letter to shareholders, Netflix announced that it would stop reporting subscriber numbers starting in the first quarter of 2025.

The company wrote: ‘In our early days, when we had little revenue or profit, membership growth was a strong indicator of our future potential.

‘But now we are generating very substantial profits and free cash flow.’

As an explanation for the decision, the company also pointed to its new revenue streams, including the addition of paid shared accounts and different subscription tiers.

Netflix has scored big with international hits like Society of the Snow (pictured), which won 12 Goya awards in Spain, the most of any film in two decades, and racked up 98.5 million views.

The letter also points to expansion into areas such as gaming and sports.

It adds: ‘We are very excited for our long-awaited live boxing match between Jake Paul and former heavyweight champion Mike Tyson, which we believe will become a must-see event this summer.’

However, the announcement caused concern among investors who saw the decision as a sign that subscriber growth would likely slow.

Facebook’s parent company Meta and X, formerly Twitter, both stopped reporting user numbers as their growth slowed.

Paolo Pescatore, technology and media analyst at PP Foresight, told MailOnline: ‘The move to stop disclosing quarterly subscriptions from next year will not go down well.

‘The next few quarters could be difficult due to seasonality; they typically underperform others as people spend more time away from home.’

Immediately following the announcement, Netflix’s stock price dropped almost five percent.

However, the share price is still up 30 percent since the beginning of the year, close to the 2021 peak.