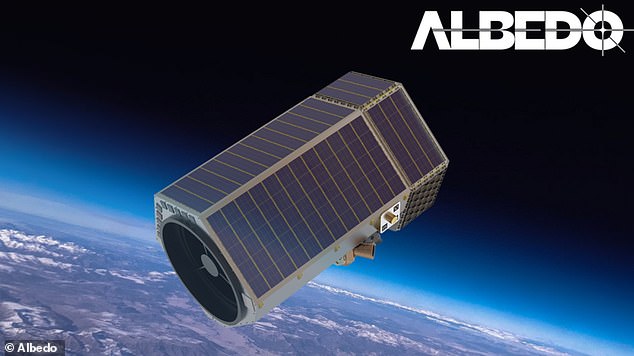

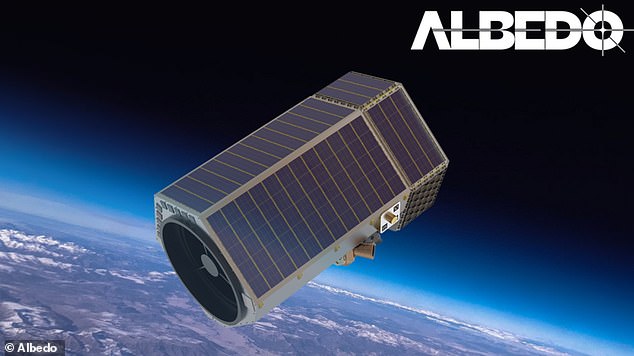

Privacy experts are sounding the alarm about a new satellite, set to launch in 2025, that is capable of spying on your every move.

The satellite, created by startup Albedo, captures images of such high resolution that it can zoom in on people or license plates from space, raising concerns among experts that it will create a “Big Brother is always watching” scenario.

Albedo claims that the satellite will not have facial recognition software, but has not said that it will refrain from photographing people or take steps to protect their privacy.

Albedo has signed two separate contracts, each worth more than $1 million, with the U.S. Air Force and the National Air and Space Intelligence Center to help the government monitor potential threats to U.S. national security.

The company raised $35 million last month to commercialize its very low Earth orbit (VLEO) satellite, on top of the $48 million it raised in September 2022.

Albedo co-founder Topher Haddad said he and his team hope to eventually have a fleet of 24 spacecraft.

“This is a giant camera in the sky that any government can use at any time without our knowledge,” Jennifer Lynch, general counsel at the Electronic Frontier Foundation, told The New York Times.

“We should definitely be worried.”

“It’s bringing us one step closer to a world where Big Brother is watching,” added Jonathan C. McDowell, an astrophysicist at Harvard.

Albedo was founded in 2020 and began building its satellites the following year. Its breakthrough technology was made possible by steps the Trump administration took in 2018 to relax government restrictions on the resolution of commercial satellites.

Then-President Donald Trump updated the US Orbital Debris Mitigation Standard Practices and created new guidelines for satellite design and operations.

Under previous National Oceanic and Atmospheric Administration (NOAA) regulations, it was illegal to build a satellite with a resolution finer than 30 centimeters; at that resolution, a satellite could identify cars and houses, but not individual people.

But under Trump’s new directive, satellites were allowed to track objects in space about 10 centimeters wide, which would improve the Air Force’s ability to catalog such objects.

Satellites use nighttime thermal infrared imaging to determine whether an object is passive or active and whether it is moving.

Most satellites orbit between 160 km (100 miles) and 2,000 km (1,243 miles) from Earth, and the sharpest commercial satellites can currently resolve objects about 30 centimeters (one foot) across.

At that resolution, satellites can only make out things like road signs and aircraft tail numbers, but Albedo aims to capture even finer detail.

The company’s satellites will capture images at a resolution of just 10 centimeters (four inches), using telescope mirrors polished to within 1/1000th the width of a human hair.

Albedo’s satellites will orbit up to 100 miles above the Earth’s surface and could be used for life-saving measures, such as helping authorities map disaster zones, but experts are concerned that they will be used to track people and invade their privacy.

Finer resolution means the images will be less pixelated, allowing those using the satellite to see objects, places and people more clearly.

Albedo says its satellites come with an intuitive interface for monitoring existing imagery and tracking trends over time, and that its cloud-based delivery pipeline can collect information in less than an hour.

Haddad addressed concerns that the satellites would destroy people’s right to privacy in a public forum, writing that the company is “well aware of the privacy implications and potential for abuse/misuse” and expects it to be “an ongoing issue and one that will evolve over time.”

He confirmed that the satellite’s 10-centimeter resolution will be able to make out people, but said the company will approve customers only on a case-by-case basis and will build “robust internal tools to find bad actors, as well as the obvious measures of adding punitive clauses to our terms and conditions.”

In March 2022, Albedo received a $1.25 million contract from the US Air Force for the second phase of development, to determine whether its satellites could identify missile tubes on warships, hardware on electronic pickup trucks and fairings on fighter jets.

The company also said its satellites can help governments “monitor hotspots, eliminate uncertainty and mobilize with speed.”

In April 2023, Albedo signed another $1.25 million contract with the National Air and Space Intelligence Center, which assesses foreign threats, for nighttime thermal infrared imaging that combines visible and thermal imaging to detect whether an object is active or passive and whether it is moving or stationary.

“We are committed to accelerating the Air Force and Space Force’s ability to understand their performance against our problem sets and apply our capabilities in orbit,” said Joseph Rouge, deputy director of intelligence, surveillance and reconnaissance for the U.S. Space Force.

“Night thermal infrared imaging can help our intelligence analysts, warfighters, decision makers and field operators resolve complex emerging threats day and night,” he added.

Then, in December, the company signed a two-and-a-half-year contract with the National Reconnaissance Office to use the satellite’s thermal infrared data to provide “geospatial intelligence for climate, food security and the environment through daily surface temperature data and analysis.”

Haddad claimed the technology will help curb climate change by showing which regions are most affected, adding that it “can be used simultaneously to support our national defense mission and mitigate our global environmental and climate crisis.”

This latter reasoning is alarming to experts who say that while VLEO satellites may be useful in some scenarios, the potential for overreach and human rights violations is increasingly worrying.

John Pike, director of GlobalSecurity.org, told The New York Times that Albedo is downplaying the potential effects of creating a satellite that can distinguish human shapes.

“You’re going to start seeing people,” he told the outlet. “You’re going to see more than points.”

In the past, private satellites have proven useful for research and commercial purposes and have helped the government with tasks such as “tracking global oil reserves, measuring deforestation in the Amazon, and identifying ships engaged in illegal fishing,” according to the Electronic Frontier Foundation (EFF).

“They have also been used to illuminate human rights abuses, providing evidence of labor camps in North Korea and Boko Haram attacks in Nigeria,” the EFF added in comments on proposed rules for licensing private satellites.

But the EFF warned that, beyond these positive uses, more detailed satellite imagery could also infringe on human rights: “The same technology that exposes human rights abuses can also be used to perpetuate them.”

Experts fear this could mean privacy becomes a thing of the past and government agencies can see anyone, anytime, anywhere without their knowledge.

“This is a giant camera in the sky that any government can use at any time without our knowledge,” Jennifer Lynch, general counsel at the EFF, told the New York Times, adding, “We should definitely be worried.”