- Eight-year-old Oumar crossed from Libya to Italy with almost 100 other people on a boat


An eight-year-old boy who traveled 3,500 miles from Africa to Europe so he could “go to school” is doing well and has “an amazing smile,” his new foster carers said.

Little Oumar was picked up by a humanitarian ship called the Ocean Viking a week ago in the Mediterranean while crossing from Libya to Italy with nearly 100 other people on a boat.

The ship then sailed to the Italian port of Ancona on the Adriatic Sea, where he was picked up by volunteers and temporarily housed with other children.

An aid worker who cares for him said: “He has an amazing smile and is adjusting well to the other children.”

“He plays football, draws and paints, and really wants to go to school.


“He is very intelligent for his age, and when he starts school he will quickly learn Italian.

“He’s talked to his parents and they know he’s in good hands.”

Oumar’s heartbreaking story emerged earlier this week when the Ocean Viking arrived in Ancona following a three-day voyage from the coast of Libya.

He told astonished rescuers that he left Mali in November and crossed Africa to Libya with a friend. He made an initial attempt at the sea crossing but was captured by the Libyan coastguard.

The two were locked up in a Libyan prison in Ain Zara before managing to escape, hiding in a garbage truck before boarding a boat heading to Europe.

Oumar told his rescuers that he had worked as a painter and welder to earn enough money to live.

He added that being in Libya “was difficult” because he was “black.”

His home was a town called Tambaga, in western Mali, where he lived with his parents, but he fled after a jihadist group attacked the area and separated them.

He continued walking and eventually ended up in Libya, where he worked for several weeks before making a first failed attempt to cross the sea.

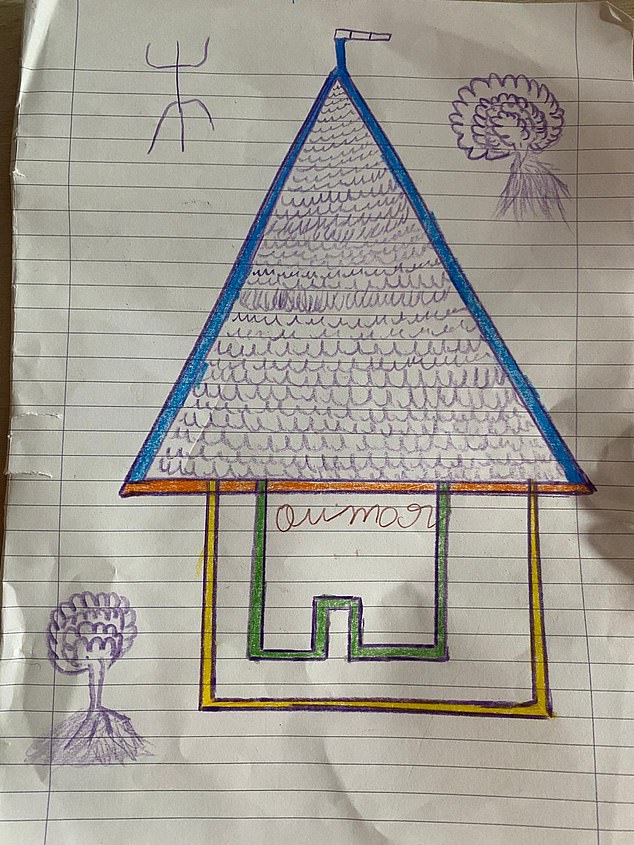

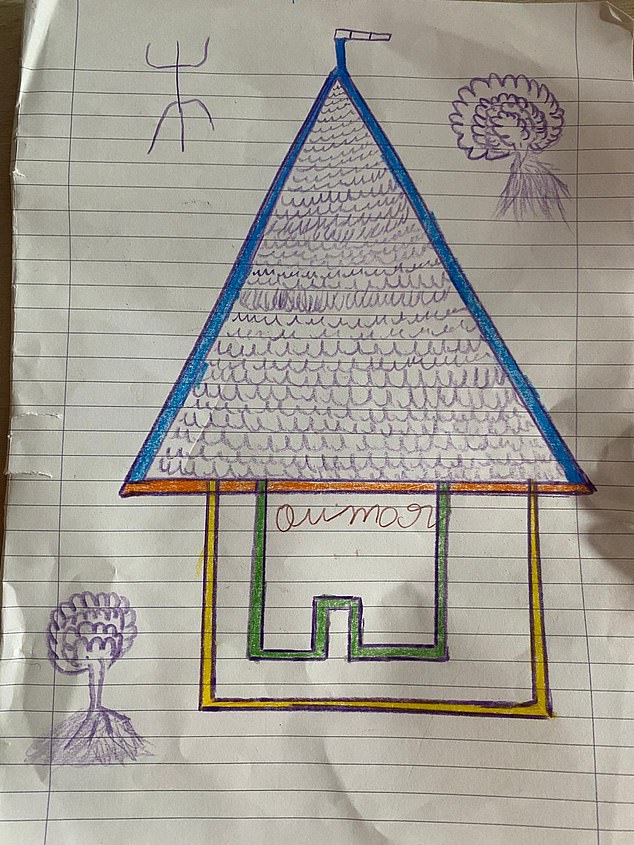

Images drawn by Oumar of the house where he stays and the boat on which he arrived

Oumar left his small village near Tambaga, in western Mali, and then traveled on foot across the Sahara before sailing towards Italy.

Earlier this month, migrants were helped to evacuate a partially deflated rubber boat (stock image)

The Libyan coastguard detained and imprisoned him before he managed to escape in the garbage truck.

While in jail he was beaten and suffered a broken foot that was diagnosed by doctors in Italy.

Since his arrival he has settled into a temporary home run by a charity called CEIS, where he will remain until he finds a permanent foster family.

Images shared with MailOnline show him drawing pictures with other children, including a stick drawing of a heartbroken toddler.

Others include photographs of the house where he is staying and the boat on which he arrived in Ancona.