Toronto Raptors player Jontay Porter has been banned for life from the NBA after a league investigation found he revealed confidential information to sports bettors and bet on games, including betting on the Raptors to lose.

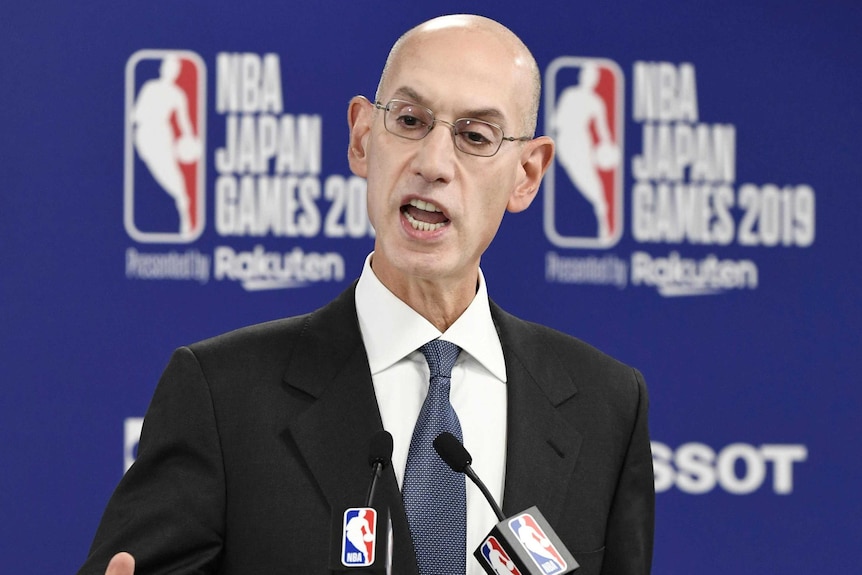

Porter is the second person banned by commissioner Adam Silver for violating league rules.

The other was now-former Los Angeles Clippers owner Donald Sterling in 2014, shortly after Silver took over.

In making the announcement, Silver called Porter’s actions “brazen.”

“There is nothing more important than protecting the integrity of NBA competition for our fans, our teams and everyone associated with our sport, which is why Jontay Porter’s flagrant violations of our playing rules are being met with the most severe punishment,” Silver said.

The investigation began once the league learned from “licensed sports betting operators and an organization that monitors legal betting markets” about unusual betting patterns surrounding Porter’s performance in a March 20 game against Sacramento.

The league determined that Porter gave a bettor information about his own health status before that game and said another individual, known to be an NBA bettor, placed an $80,000 bet that Porter would not reach the numbers set for him in parlays through an online sportsbook.

That bet would have won $1.1 million.

Porter withdrew from that game after less than three minutes, citing illness, and none of his statistics met the totals set on the parlay. The $80,000 bet was frozen and unpaid, the league said, and the NBA launched an investigation soon after.

“You don’t want this for the kid, you don’t want this for our team and we don’t want this for our league, that’s for sure,” Raptors president Masai Ujiri said shortly before the NBA announced Porter’s sanction.

“My first reaction is obviously surprise, because none of us, I don’t think anyone, saw this coming.”

Later, after the NBA revealed the ban, the Raptors said they “fully support the league’s decision to ban Jontay Porter from the NBA and are grateful for the quick resolution of this investigation. We will continue to cooperate with all ongoing investigations.”

The league has partnerships and other relationships with more than two dozen gaming companies, many of which advertise during NBA games in various ways. Silver himself has long been an advocate for legal sports betting, but the league has very strict rules for players and employees regarding betting.

The league also determined that Porter, brother of Denver Nuggets forward Michael Porter Jr., placed at least 13 bets on NBA games using another person’s betting account.

Bets ranged from $15 to $22,000; the total wagered was $54,094 and generated a payout of $76,059, or net winnings of $21,965.

Those bets did not involve any games in which Porter played, the NBA said. But three of the bets were multi-game parlays, including one in which Porter, who was not playing in the games involved, bet that the Raptors would lose. All three of those bets lost.

“While legal sports betting creates transparency that helps identify suspicious or abnormal activity, this matter also raises important questions about the adequacy of the regulatory framework currently in place, including the types of bets offered on our games and players,” Silver said.

“Working closely with all relevant industry stakeholders, we will continue to work diligently to safeguard our league and our game.”

AP
